LLMs are getting scarily good at medical tests. Every few months there’s another headline about near‑perfect licensing-exam scores, or a demo that sounds like a doctor in your pocket. But here’s the uncomfortable question: does that translate to better decisions for normal people at home—when they’re worried, underslept, and not sure what details matter?

A preregistered randomized study in Nature Medicine tackled that question directly, and the results are… sobering. The models did fine. The people didn’t do better with the models. In some ways, they did worse.

What the researchers actually tested

The team recruited 1,298 adults in the UK and gave them 10 medically curated scenarios—the kind of “what would you do right now?” situations people face at home. Participants had to do two things: choose a next step on a five-point disposition scale (self-care → call an ambulance), and list the conditions they were considering.

Then the key move: participants were randomized into four groups.

- One group used GPT‑4o

- One used Llama 3

- One used Command R+

- And a control group used whatever they’d normally use at home (which, in practice, often means web search and trusted sites).

The scenarios and scoring weren’t “vibes-based,” either. Three physicians drafted the scenarios and agreed unanimously on the best disposition; four additional physicians generated differential diagnoses and “red flag” conditions used for scoring.

Why does that matter? Because a lot of “LLM in healthcare” evaluation is still basically: Here’s a question; here’s the answer; score it. This paper is closer to the real world: a layperson trying to explain symptoms, interpret suggestions, and decide what to do.

The part that surprised everyone: the models were decent… and still didn’t help

When the models were tested on their own—given the full scenario and asked to respond directly—they looked pretty strong:

- They identified at least one relevant condition 90.8%–99.2% of the time (depending on model).

- They chose the correct disposition 48.8%–64.7% of the time.

That’s not perfect, but it’s clearly above chance.

Now comes the punchline.

When real people used those same models, performance fell off a cliff:

- Participants using LLMs identified relevant conditions in fewer than 34.5% of cases—and the control group was significantly better at naming at least one relevant condition.

- For disposition accuracy, LLM users were not statistically better than control; correct dispositions were under 44.2%.

- The control group had 1.76× higher odds of identifying a relevant condition than participants using LLMs overall, and was 1.57× more likely to identify “red flag” conditions.

If you’re building patient-facing tools, it’s hard not to wince at that.

Because it’s not saying “LLMs know nothing.” It’s saying something more operationally painful:

A capable model does not automatically become helpful assistance once you put it in front of real users.

So what broke? Mostly the interaction, not the knowledge

Reading the results, I kept thinking: this is what clinicians already know. People don’t walk into an appointment and give a perfect history. Clinicians pull the story out with a structured interview.

This study basically shows what happens when you remove that structure.

A few breakdowns stood out:

1) People didn’t know what details to include

In a manual review of transcripts (a sample of 30 interactions), the researchers found that initial user messages were often missing key information—in 16 of the 30 cases reviewed. Sometimes people added details later, but the starting point mattered, because it steered the conversation.

2) The models were less reliable in dialogue than in “model-alone” mode

Across all transcripts, the models mentioned at least one relevant condition 65.7%–73.2% of the time during conversations—noticeably lower than their performance when given the full scenario upfront. That suggests a simple truth: the conversation often didn’t contain the right clinical picture.

3) Even when the model said the right thing, users didn’t necessarily use it

The paper shows that correct conditions can appear in the chat and still not show up in the participant’s final answer. That’s not a “knowledge” problem. That’s a comprehension + trust + attention problem.

4) Too many possibilities, not enough signal

On average, the LLMs surfaced 2.21 possible conditions per interaction, but only 34.0% of those were correct. Participants’ final lists were only slightly better at 38.7% precision. In human terms: if you throw a grab-bag of diagnoses at someone who’s already anxious, you haven’t helped—you’ve handed them homework.

The quiet bombshell: benchmarks didn’t predict this

This is the part I hope developers and regulators sit with. The authors compared interactive performance to two common evaluation shortcuts:

- Medical QA benchmarks (exam-style questions): The models did well on structured Q&A, but that performance was largely uncorrelated with how people performed when using the model in interactive scenarios.

- Simulated patients: They also tried replacing humans with an LLM-simulated user. The simulation results looked better and less variable than real humans—and only weakly correlated with real-user outcomes.

Translation: if you “validate” a public-facing health assistant using only exam questions or synthetic users, you may miss the very failure modes that matter most in the wild: incomplete histories, misinterpretation, and trust gone sideways.

What I’d take from this if I were building a public health assistant

I don’t read this paper as “don’t use LLMs in healthcare.” I read it as: stop confusing medical knowledge with product safety.

A few practical implications feel pretty direct:

- Make it feel less like chat, more like a guided clinical interview: A free-form text box is not how medicine works. Tools should proactively ask targeted follow-ups, confirm key details, and force the conversation to collect the missing pieces before it offers advice.

- Triage first, diagnosis second: If you’re going to present multiple possibilities, rank by urgency and clearly highlight “must-not-miss” red flags. Otherwise you’ll produce the worst outcome: confident confusion.

- Design for trust calibration: The authors explicitly frame “human–LLM interaction failures” as the blocker, and call for more reliable, deterministic design and systematic testing with real users. That’s another way of saying: don’t just ship a model—ship guardrails, consistency checks, and UX that prevents over-trust.

- Test with real humans early (and keep testing): The paper ends with a clear recommendation: systematic human user testing should be required before public deployment for medical advice scenarios.

For clinicians: expect more AI-shaped narratives in the room

Millions of people already use chatbots for health questions. This paper suggests those “AI opinions” may be no better than what people can find via conventional resources—and may be worse at surfacing relevant conditions and red flags.

That doesn’t mean dismissing patients who bring AI output. It means we may need to get better at a new step in the encounter: unpacking what the patient asked, what the model said, and what the patient heard.

Closing thought

The takeaway isn’t “LLMs are bad at medicine.”

It’s that LLMs can be good at medicine and still fail as assistants—because assistance isn’t only about correctness. It’s about interaction: eliciting the right information, communicating uncertainty, and guiding attention toward what matters.

If we want patient-facing AI to be safe and useful, we have to evaluate it the way people actually use it: messy, contextual, and human.

Reference

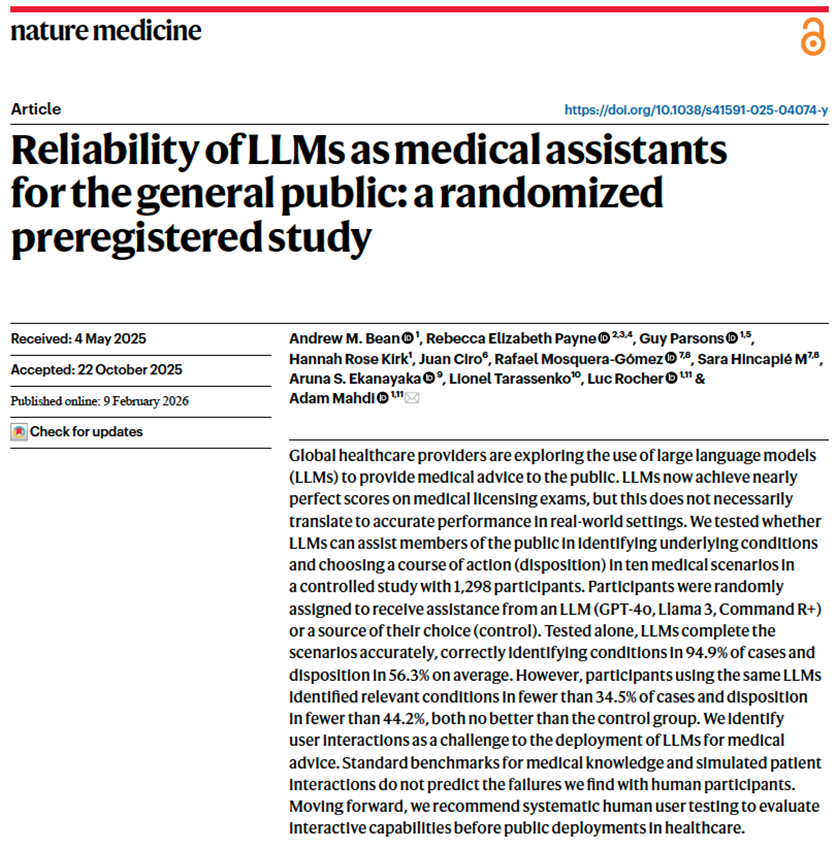

Bean AM et al. Reliability of LLMs as medical assistants for the general public: a randomized preregistered study. Nature Medicine, Feb 9, 2026. DOI: 10.1038/s41591-025-04074-y.

Disclaimer: This article is for informational purposes only and does not provide medical advice.