Artificial intelligence is gaining traction across many disciplines, and health economics and outcomes research (HEOR), real-world data (RWD), and medical affairs are no exception. To understand how professionals in these functions perceive AI, how they use it, and what concerns they have about it, Polygon Health Analytics conducted a targeted survey in April 2026. We found that AI is already widely used across HEOR, RWD, and medical affairs, but respondents primarily trust it as an accelerator, not an autonomous decision-maker, and they consistently emphasize the need for human oversight, especially for evidence-grade outputs.

Below is a practitioner-focused summary of what we learned, paired with practical takeaways for teams looking to adopt AI responsibly.

Survey snapshot: who responded?

A total of 133 HEOR, RWD, and medical affairs professionals completed the survey. Self-reported AI familiarity was predominantly basic to moderate, consistent with an end-user workforce still building AI fluency:

- AI experts: 4% (5/133)

- Advanced understanding: 11% (15/133)

- Moderate understanding: 24% (32/133)

- Basic understanding: 52% (69/133)

- Very limited understanding: 9% (12/133)

To keep insights practical and decision-relevant, we place primary emphasis on findings from respondents with at least basic AI familiarity. Unless otherwise noted, the percentages reported below reflect the survey distributions as collected.
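
For readers who want to check the arithmetic, the familiarity shares listed above can be recomputed from the raw counts. This is a minimal sketch: the variable and function names are illustrative, and rounding to whole percentages is an assumption about how the figures were prepared.

```python
# Respondent counts by self-reported AI familiarity, as listed above.
counts = {
    "AI experts": 5,
    "Advanced understanding": 15,
    "Moderate understanding": 32,
    "Basic understanding": 69,
    "Very limited understanding": 12,
}

total = sum(counts.values())  # should equal the 133 completed surveys


def share(n: int, denom: int) -> int:
    """Return n/denom as a whole-number percentage (assumed rounding rule)."""
    return round(100 * n / denom)


shares = {level: share(n, total) for level, n in counts.items()}

# Respondents with at least basic familiarity: everyone except "very limited".
at_least_basic = total - counts["Very limited understanding"]

for level, pct in shares.items():
    print(f"{level}: {pct}% ({counts[level]}/{total})")
print(f"At least basic familiarity: {at_least_basic}/{total}")
```

The at-least-basic group works out to 121 respondents, which coincides with the n = 121 shown for the economic modeling question, although the survey summary does not state that this is the same subgroup.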

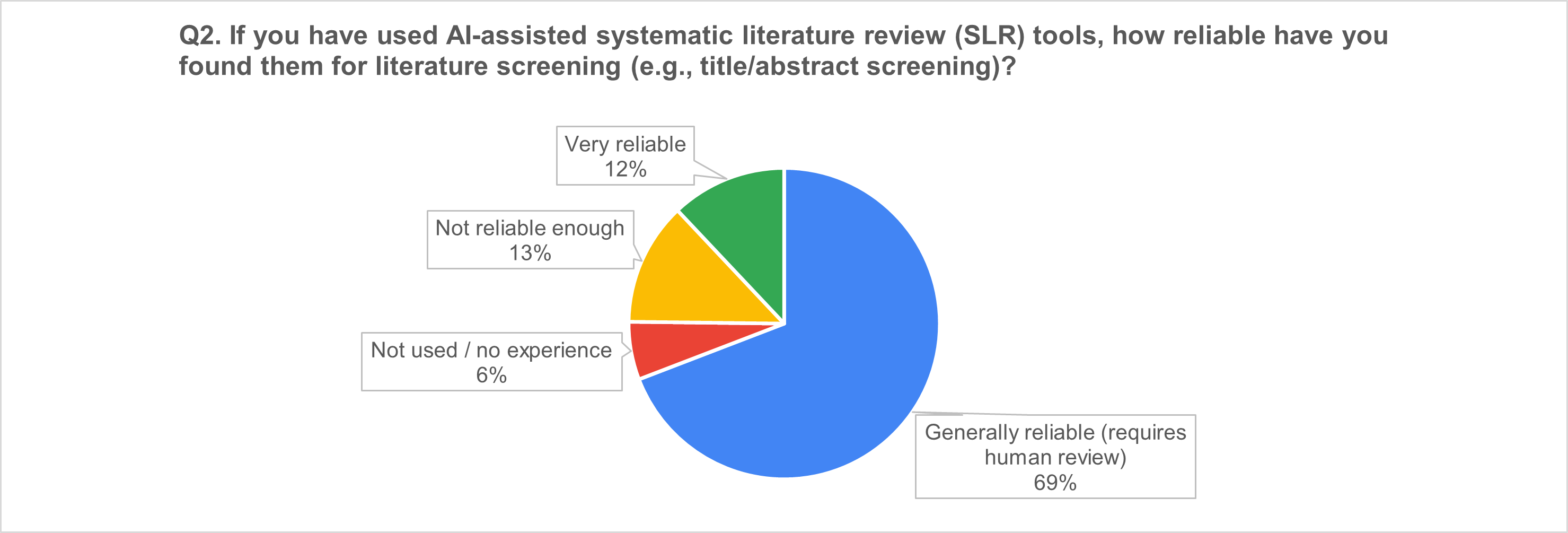

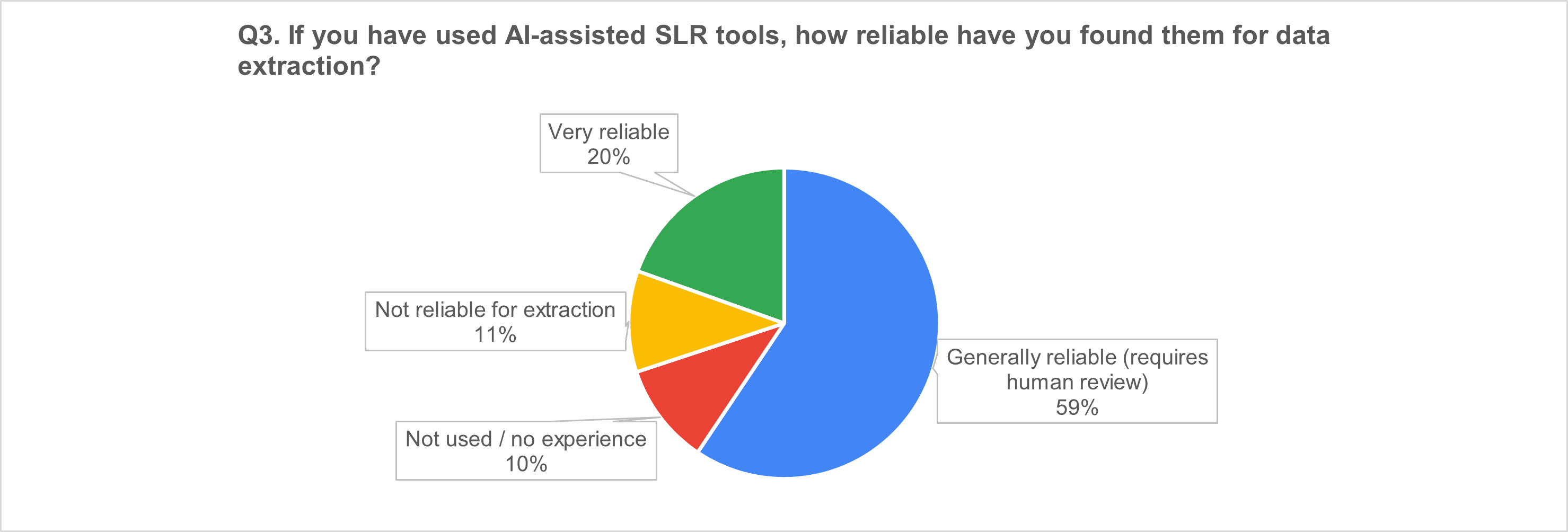

1) AI-assisted SLR tools: “useful accelerators,” not autopilot

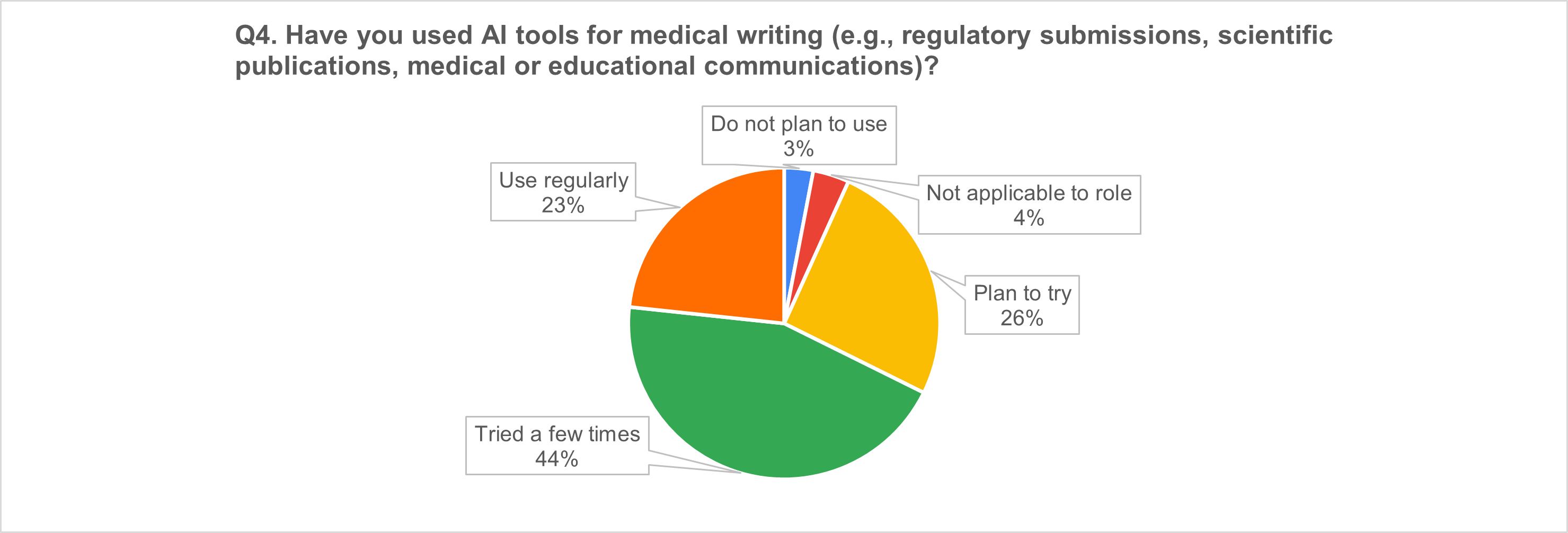

2) AI in medical writing: broad uptake and strong momentum

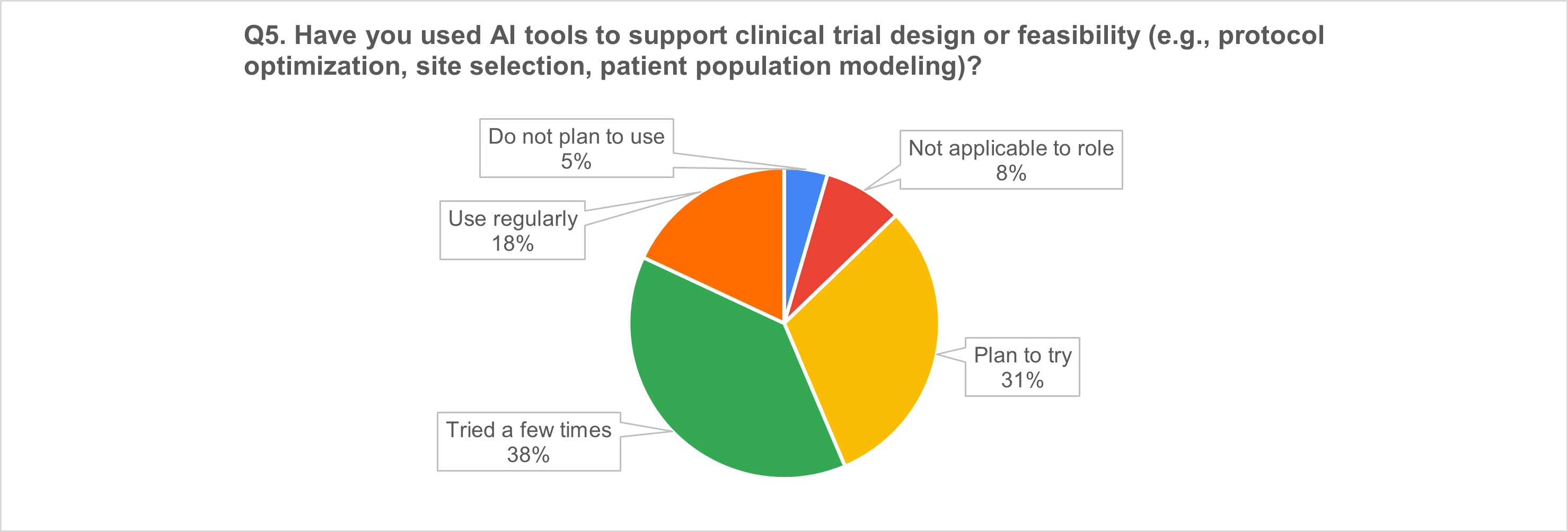

3) Trial design & feasibility: more than half already using AI

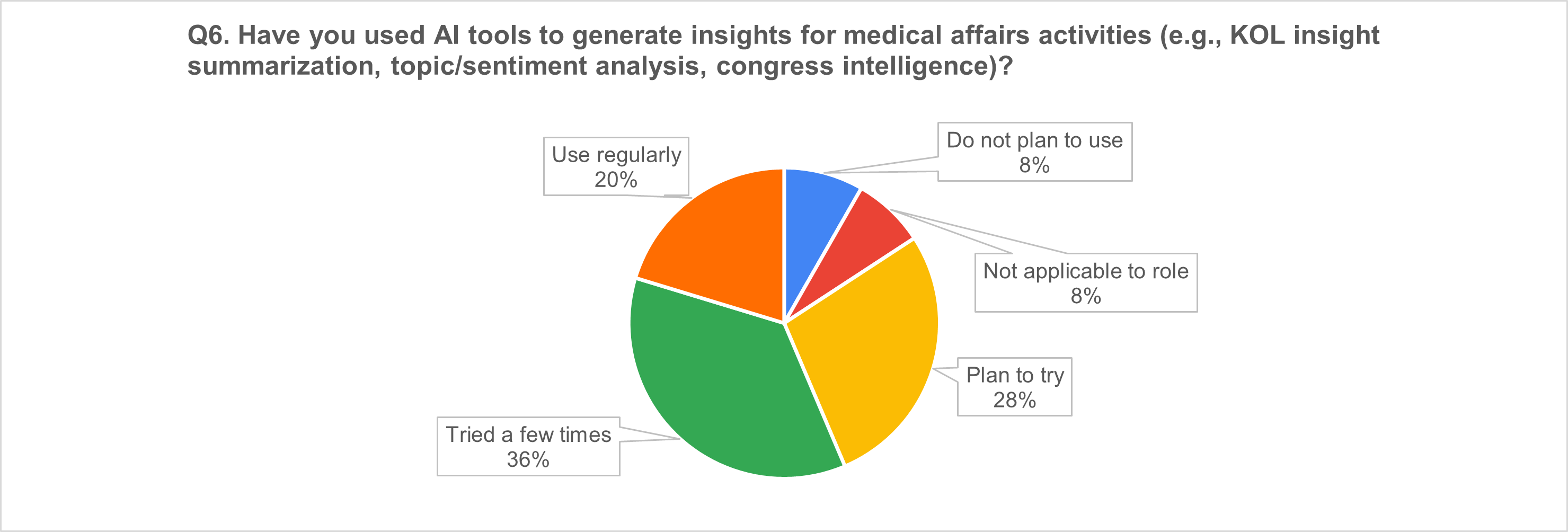

4) Medical affairs insights: strong use, still often “experimental”

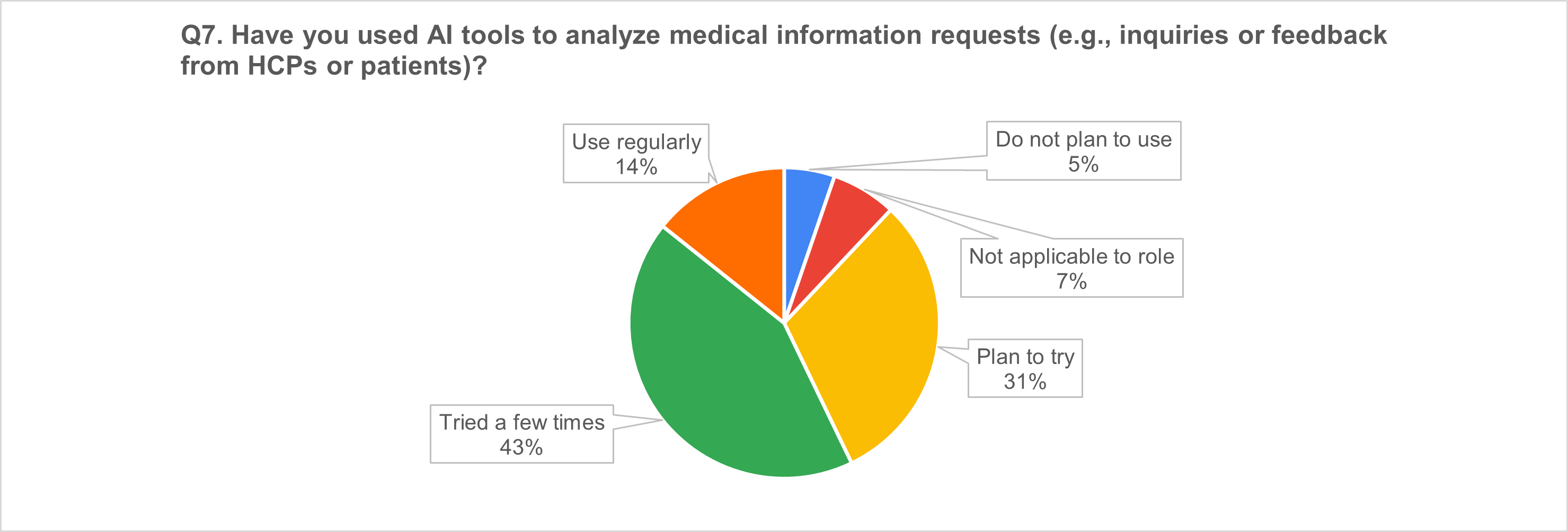

5) Medical information requests: a high-uptake use case

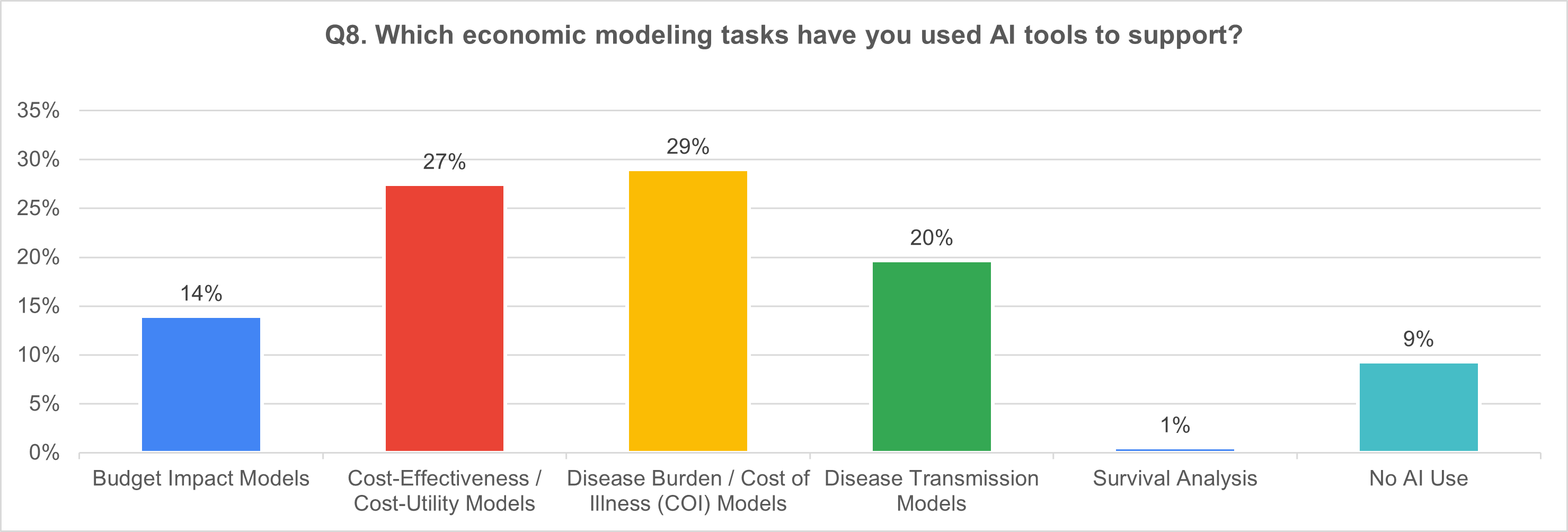

6) Economic modeling tasks supported by AI (multi-select; n = 121)

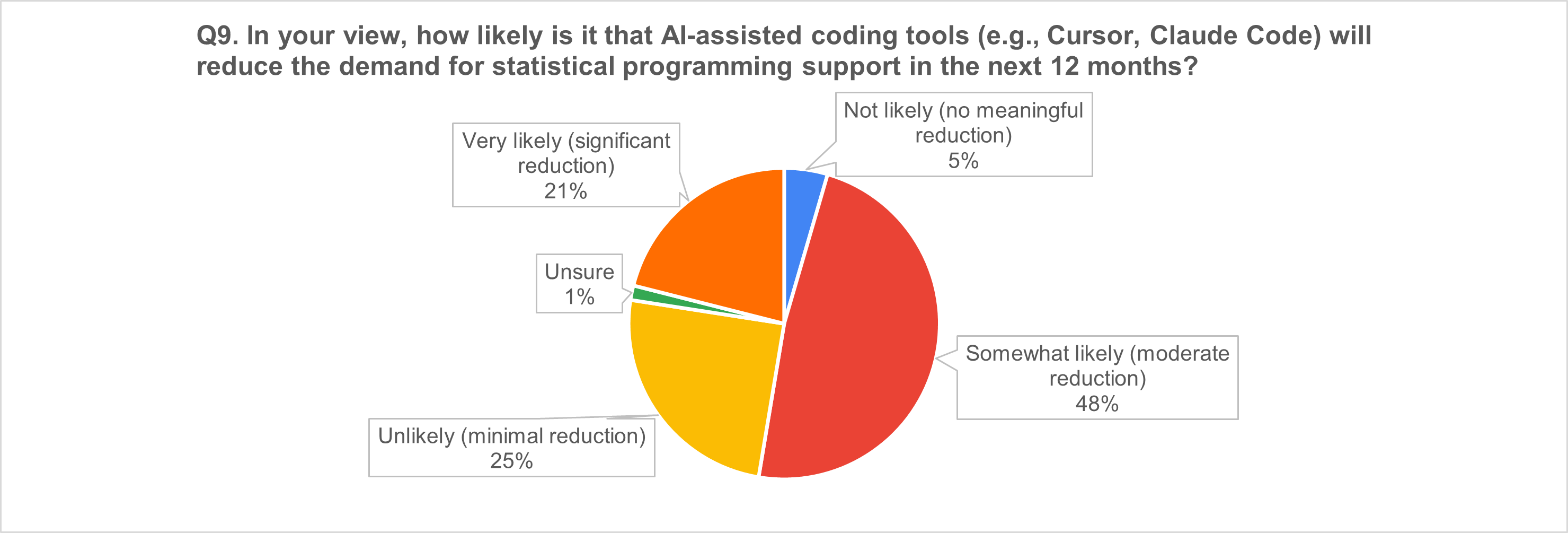

7) AI coding tools and the near-term workforce question

The “why” behind the numbers: respondents' top AI concerns (n = 42 free-text responses)

We asked respondents to describe the most important limitations/risks of AI in HEOR/medical affairs and the guardrails the industry should implement. Four themes dominated:

1) Data quality and representativeness

Respondents emphasized that HEOR/RWD data are often messy, incomplete, and biased; AI can amplify missingness and noise, producing misleading conclusions—especially for underrepresented subgroups.

Suggested guardrails: dataset quality standards, diversity audits, subgroup validation, and transparent reporting of population coverage.

2) “Black box” models and defensibility

Opacity undermines trust, reproducibility, and the ability to defend results—especially for regulatory and HTA audiences.

Suggested guardrails: explainable AI for high-stakes uses; interpretability documentation; version control, audit trails, and traceability.

3) Output validity and hallucinations

Respondents flagged fabricated or context-poor outputs and misuse of predictive models as causal evidence.

Suggested guardrails: mandatory human-in-the-loop QC; clear separation between exploratory vs decision-grade outputs; continuous monitoring and validation.

4) Privacy, confidentiality, and compliance

Handling sensitive patient data increases exposure to privacy risk and noncompliance.

Suggested guardrails: encryption, de-identification/anonymization, strict access controls, and routine audits.

A cross-cutting concern was over-reliance—the risk that AI substitutes for expertise, judgment, and the human elements of scientific exchange and trust-building.

What this means for HEOR/RWD/medical affairs teams

Based on the results, we believe the industry is converging on three practical realities:

- AI adoption is happening now, but trust remains conditional. Professionals are willing to use AI to accelerate work, but they are not ready to delegate accountability to it.

- Human review is not a weakness; it’s the operating model. Across SLR, medical writing, and insight generation, respondents repeatedly framed AI as reliable when paired with human oversight.

- The near future is about workflow redesign, not job replacement. Even where respondents expect coding tools to reduce demand for programming support, the dominant expectation is a moderate reduction, consistent with augmentation and productivity shifts more than displacement.

Practical guardrails you can implement today

If you’re adopting AI in HEOR/RWD/medical affairs, consider this baseline governance package:

- Define output tiers: exploratory vs decision-grade outputs (and the QC required for each).

- Establish QC standards: human review requirements, sampling plans, and error thresholds.

- Data governance: provenance tracking, representativeness checks, subgroup validation.

- Transparency: model documentation, prompt/version control, audit trails.

- Privacy & security: de-identification, access controls, monitoring.

- Training: educate teams on limitations, bias, and appropriate interpretation.

- Accountability: ensure responsibility stays with qualified professionals—not tools.
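
To make the first two items concrete, here is one way output tiers, a review sampling plan, and an error threshold could be written down in code. It is a sketch under stated assumptions, not a recommended standard: the tier names, review fractions, thresholds, and function names are all hypothetical, and real values are a governance decision for each team.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class OutputTier:
    name: str
    review_fraction: float  # share of AI outputs a human must review
    max_error_rate: float   # highest tolerated error rate among reviewed items


# Hypothetical tiers and thresholds, chosen only for illustration.
TIERS = {
    "exploratory": OutputTier("exploratory", review_fraction=0.2, max_error_rate=0.10),
    "decision_grade": OutputTier("decision_grade", review_fraction=1.0, max_error_rate=0.0),
}


def select_for_review(item_ids, tier, seed=0):
    """Draw a reproducible random sample of item ids for human review."""
    items = list(item_ids)
    if not items:
        return []
    k = max(1, round(len(items) * tier.review_fraction))
    rng = random.Random(seed)  # fixed seed keeps the sample auditable
    return sorted(rng.sample(items, k))


def passes_qc(review_results, tier):
    """review_results maps item id -> True if the reviewer found an error."""
    if not review_results:
        return False  # nothing reviewed means nothing can be signed off
    error_rate = sum(review_results.values()) / len(review_results)
    return error_rate <= tier.max_error_rate


# Usage: 50 AI-drafted items in the exploratory tier.
ids = list(range(1, 51))
sample = select_for_review(ids, TIERS["exploratory"])
# Reviewer findings would come from qualified people; simulated here as error-free.
results = {i: False for i in sample}
print(len(sample), passes_qc(results, TIERS["exploratory"]))
```

In this sketch the decision-grade tier reviews every item and tolerates no errors, which mirrors the accountability point in the list: the tool proposes, and a qualified professional signs off.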

Want to benchmark your organization?

If your team is exploring AI adoption in HEOR, RWD, or medical affairs—and wants to compare internal practices against industry sentiment—Polygon Health Analytics can help you:

- evaluate where AI adds value safely,

- design guardrails and QC workflows, and

- prioritize use cases with the best signal-to-risk ratio.

Contact us if you’d like a short benchmarking template or a one-page governance checklist tailored to your use case.