“People should stop training radiologists now.” — Geoffrey Hinton (2016; he later conceded the timeline was wrong)

“Within 10 years, AI will replace many doctors…” — Bill Gates, The Tonight Show, Feb 2025

“Humanoid robots will outperform top surgeons within 3–5 years.” — Elon Musk (2025–2026 interviews)

“AI may one day take over many duties traditionally performed by doctors.” — Demis Hassabis, CEO of Google DeepMind, Wired interview, August 2025

I’ve just returned from USCAP, where I spoke with practicing pathologists across academic centers, community hospitals, and reference labs. Their message was remarkably consistent:

Pathologists welcome AI as an assistant that can improve efficiency and productivity—but they do not believe they will be replaced anytime soon.

Here is the reality they shared—and why AI’s impact in pathology will likely be evolutionary, not revolutionary.

Ten Reasons Pathologists Aren’t Being Replaced (Anytime Soon)

1) Variability matters: “reading slides” is not plug‑and‑play

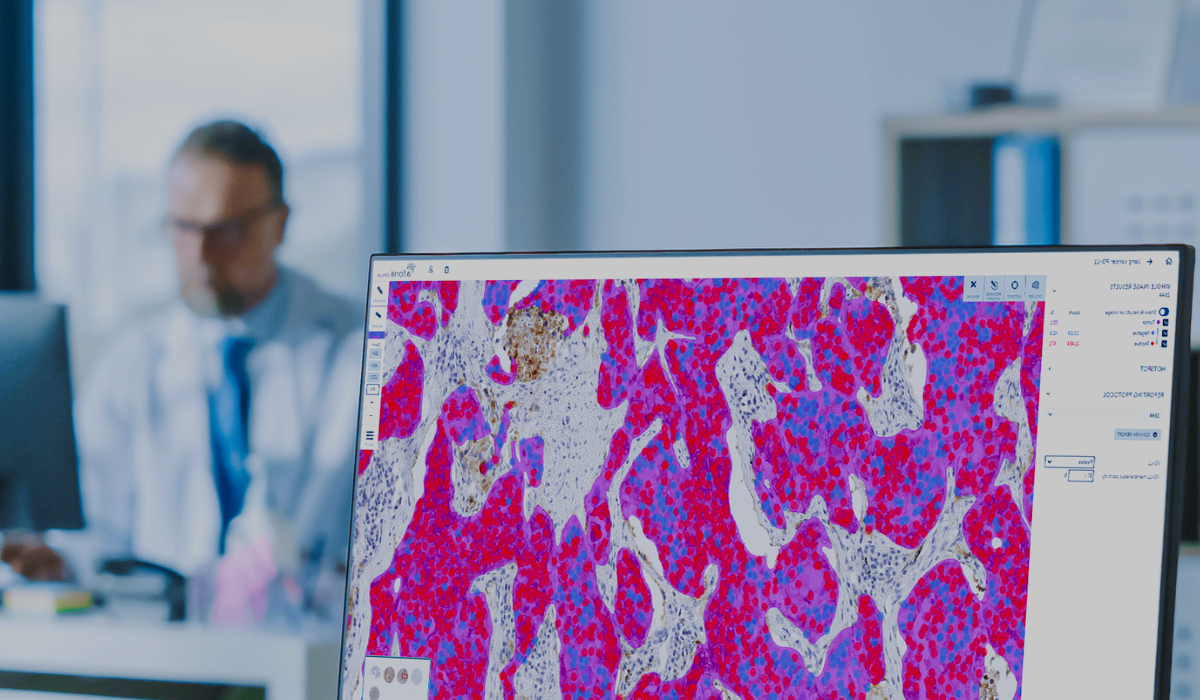

Even if we focus only on “reading slides,” deploying AI in pathology is far more difficult than many expect. In principle, pathology involves sampling, staining, slide preparation, and microscopic examination. In practice, every step varies. Blood samples and bone samples require different preparation. Hospitals differ in staining protocols, section thicknesses, scanner brands (Leica, Philips, Hamamatsu), and lighting conditions.

A model trained on one hospital’s slides may perform poorly at another, because the images themselves look different (a problem often called domain shift). This is less an algorithm problem than the physical reality of medical imaging. No single, universal AI model today handles all of this variability.

2) The “gold standard” isn’t binary

We often call the pathology report the gold standard for diagnosis. From an AI or computer‑science perspective, a gold standard should be clear‑cut. In reality, pathology is full of uncertainty.

Take B‑cell lymphoma as an example. There are multiple diagnostic approaches (morphology, immunohistochemistry, flow cytometry, and molecular testing among them), each with different sensitivity, specificity, cost, and complexity. In many cases, the final diagnosis still depends heavily on the pathologist’s experience and judgment.

3) You can’t use AI on glass

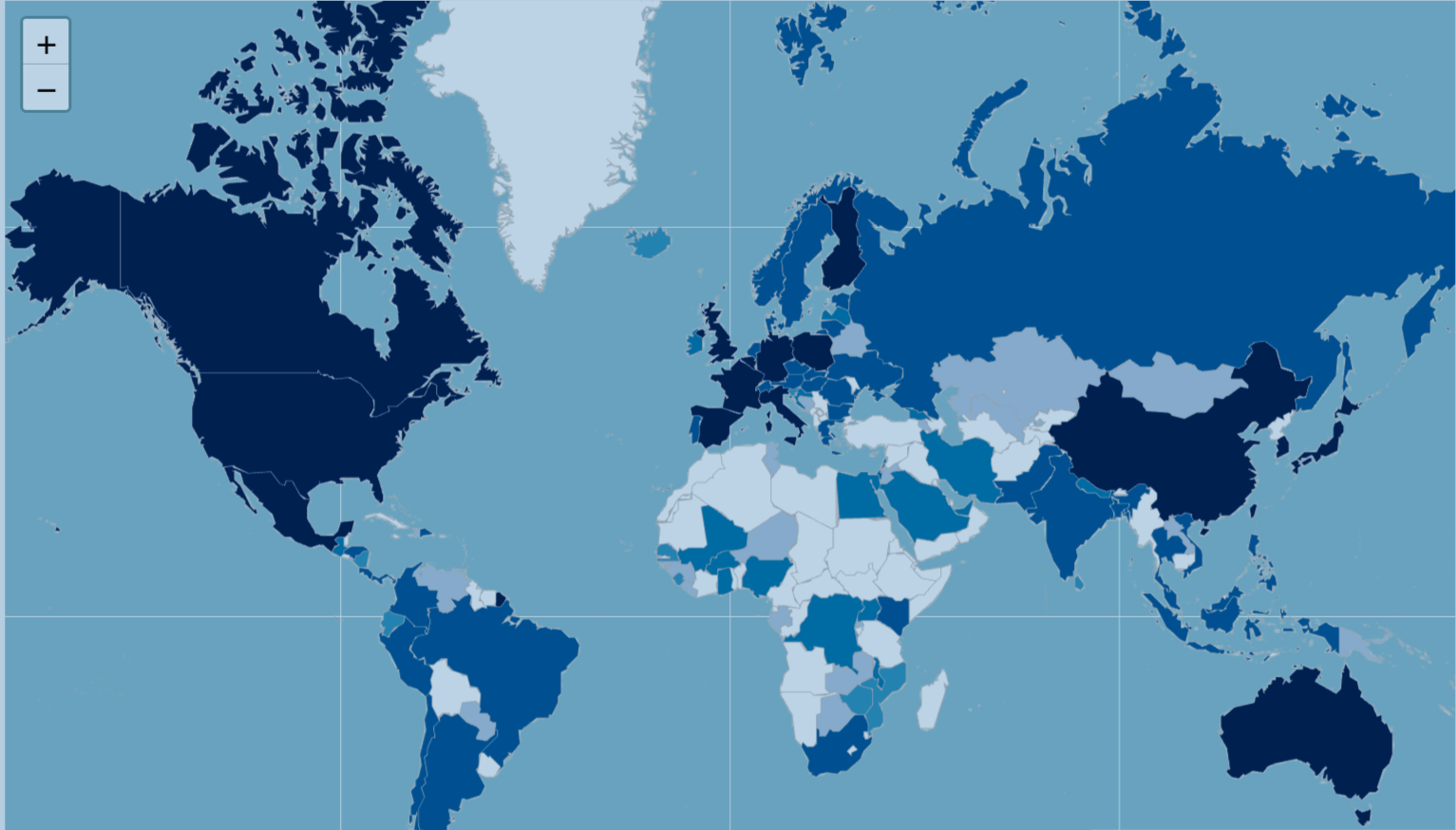

From a logistics standpoint, only about 10% of pathology workflows in the United States are fully digitized. Slide scanners range from roughly $2,000 to over $100,000, depending on speed, capacity, and software.

If pathologists are still reviewing slides under traditional microscopes and writing reports that way, the basic prerequisites for AI deployment simply do not exist.

4) Regulatory reality: platforms > “push‑button diagnosis”

Recent FDA actions show how AI is actually entering clinical practice:

- Paige Prostate (2021): the first FDA‑authorized AI in pathology, used as an adjunct to flag suspicious regions on prostate biopsy slides

- PathAI AISight® Dx (2025): FDA 510(k) clearance for primary diagnosis as a digital pathology image management system (IMS), with a Predetermined Change Control Plan (PCCP)

- Indica Labs HALO AP Dx (2025): FDA‑cleared enterprise digital pathology platform supporting the SVS and DICOM image formats

The pattern is clear: what reaches the clinic is platforms that enable digital workflows, plus adjunct AI tools, not fully autonomous diagnosis.

5) Pathology has a long tail of subspecialties

Pathology is highly fragmented—dermatopathology, gynecologic pathology, hematopathology, GI pathology, and more. Each narrow indication requires its own model, validation, and regulatory pathway.

Paige’s first FDA authorization focused solely on prostate cancer. Every additional disease area requires new data, new evidence, and new approvals.

6) Clinicians want explainable, improvable models—not frozen black boxes

Most cleared pathology AI models today are locked and non‑adaptive, largely due to regulatory constraints. That makes pathologists uncomfortable.

What happens if the training data has errors? What if clinicians discover systematic mistakes in the model’s predictions but have no way to correct or improve it? From a medical and scientific standpoint, that approach feels fundamentally unscientific.

7) High sensitivity does not equal safe delegation

Many pathology AI models claim ~90% sensitivity, which sounds impressive. But sensitivity is measured on positive cases: at 90%, the model misses roughly one in ten of them. If ten of a pathologist’s cases are truly positive and the AI misses one, the liability risk remains with the physician.

Worse, the pathologist doesn’t know which case was missed, so every case the AI calls negative still requires a full, careful review. In that scenario, how much time or risk does AI really reduce?
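
To make the arithmetic concrete, here is a minimal Python sketch of what a ~90% sensitivity figure implies. It assumes the model’s errors are independent from case to case, which is a simplification, and the function names are illustrative rather than taken from any vendor’s tooling.

```python
def expected_misses(positive_cases: int, sensitivity: float) -> float:
    """Expected number of truly positive cases the model fails to flag."""
    return positive_cases * (1.0 - sensitivity)


def prob_at_least_one_miss(positive_cases: int, sensitivity: float) -> float:
    """Probability that at least one positive case goes unflagged.

    Assumes each case is an independent trial (a simplifying assumption).
    """
    return 1.0 - sensitivity ** positive_cases


if __name__ == "__main__":
    # Ten truly positive cases at the ~90% sensitivity quoted in the text.
    print(f"expected misses: {expected_misses(10, 0.90):.2f}")
    print(f"chance of at least one miss: {prob_at_least_one_miss(10, 0.90):.2f}")
```

Under these assumptions, ten positive cases yield about one expected miss and roughly a 65% chance that at least one positive case slips through, which is why a headline sensitivity number alone does not justify skipping review.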

8) Data gravity is real

A single whole‑slide image averages roughly 1.3 GB, and complex cases can involve 20–30 slides, or about 26–39 GB per patient.

At scale, network bandwidth, storage costs, and image‑streaming performance become real bottlenecks without serious infrastructure investment.
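
A back-of-the-envelope estimate shows how quickly this adds up. The sketch below uses the ~1.3 GB per slide and 20–30 slides per complex case from the text; the annual case volume and average slides per case in the usage example are hypothetical placeholders, not figures from any real lab.

```python
GB_PER_SLIDE = 1.3  # average whole-slide image size quoted in the text


def case_size_gb(slides: int, gb_per_slide: float = GB_PER_SLIDE) -> float:
    """Total image size in gigabytes for one case."""
    return slides * gb_per_slide


def annual_storage_tb(cases_per_year: int, avg_slides_per_case: float,
                      gb_per_slide: float = GB_PER_SLIDE) -> float:
    """Yearly storage in terabytes (1 TB = 1,000 GB), excluding backups."""
    return cases_per_year * avg_slides_per_case * gb_per_slide / 1000.0


if __name__ == "__main__":
    print(f"20-slide case: {case_size_gb(20):.0f} GB")
    print(f"30-slide case: {case_size_gb(30):.0f} GB")
    # Hypothetical lab: 50,000 cases a year, 5 slides per case on average.
    print(f"yearly storage: {annual_storage_tb(50_000, 5):.0f} TB")
```

With those hypothetical inputs the yearly total lands in the hundreds of terabytes before any backup or replication, which is the scale at which storage and streaming become budget line items.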

9) The business case is still evolving

Hospital leaders must balance platform licenses, per‑slide or per‑seat fees, scanner capital costs, validation and change‑management overhead, and long‑term storage.

Even mid‑range scanners cost tens of thousands of dollars, before accounting for software, services, and IT infrastructure. Most institutions are understandably cautious.

10) Reading slides is only one part of the job

Pathologists do far more than read slides. They integrate clinical history, lab results, radiology imaging, molecular diagnostics, and immunohistochemistry; consult with clinicians; participate in tumor boards; and perform rapid intraoperative frozen‑section diagnoses in the operating room.

Any AI system that hopes to make real impact must be deeply integrated into this broader clinical workflow—not treated as a standalone tool.

Bottom line

AI will absolutely reshape pathology—but not by replacing pathologists. The future is human‑in‑the‑loop, where AI augments expertise, improves consistency, and helps manage growing workloads.

The gap between AI hype and clinical reality is still wide—and understanding that gap is essential if we want AI to actually improve patient care.