Artificial intelligence (AI), machine learning (ML), deep learning (DL), and natural language processing (NLP) are hard-to-avoid buzzwords at professional conferences, in scientific journals, technology news, and social media, and, of course, in any vendor’s marketing pitch. Many enthusiasts have made a splash in the media by claiming that AI is going to revolutionize everything and that, in healthcare, state-of-the-art AI technologies are going to find the cure for cancer tomorrow!

Unfortunately, most organizations, if not all, in healthcare delivery, life sciences, education, insurance, and many other long-established industries struggle to get through proofs of concept (PoCs) and deploy AI models to production. AI models depend heavily on high-quality, diverse, dynamic, and unbiased data, whereas in most cases data is still locked in silos, inaccessible, poorly structured, and, most importantly, not organized in a way that lets it serve as the fuel that makes AI work.

According to an IDC survey conducted in the US and Canada in 2018 among 405 IT and data professionals involved in AI deployment in their organizations (see Figure 2), the top challenges in deploying AI are data volume and quality, advanced data management, and the skills gap. The survey results remain valid today.

Based on 20 years of experience researching and developing data analytics and AI solutions in large organizations, I have prepared the following checklist to guide your AI deployment. This checklist can help companies outside the computer technology sector, particularly those in the healthcare ecosystem, avoid common traps during PoC projects (yes, there are many) and generate real business impact when trying out new AI solutions for the first time.

- What data elements did you capture in your past operations? Are they enough to answer the question asked? Did you miss any key data elements that the AI algorithm needs to predict with high fidelity? How many instances (e.g., historical events) have you accumulated to represent the various real-life situations that you want the AI model to predict? Are those data in a format that a computer can readily process? The answers to these questions will largely decide the AI solution’s performance.

- Most powerful AI solutions utilize supervised learning. Therefore, pre-labeled or annotated data are required to train a model (similar to how we teach a baby to tell an apple from an orange or a pear). Do you have the time and budget to have someone label or annotate the data if they are not available yet? I need to caution you that this step often comes with a jaw-dropping cost. Data annotation has become an emerging business area, and many tech companies, including Scale AI, V7, SuperAnnotate, LabelBox, DataLoop, CVAT, QCraft, Parallel Domain, and Superb AI, are very experienced with image and video annotation. Natural language labeling and annotation are more complicated. Labeling and annotating medical language (e.g., clinical notes from an EHR system) require domain experts and can be cost-prohibitive.

- How tolerant are you of prediction errors? Recommending a book to an online shopper, driving a car autonomously, and suggesting a diagnosis and treatment clearly have different levels of tolerance for computer errors. Engaging the business stakeholders and setting the right expectations from the beginning of the AI project is critical.

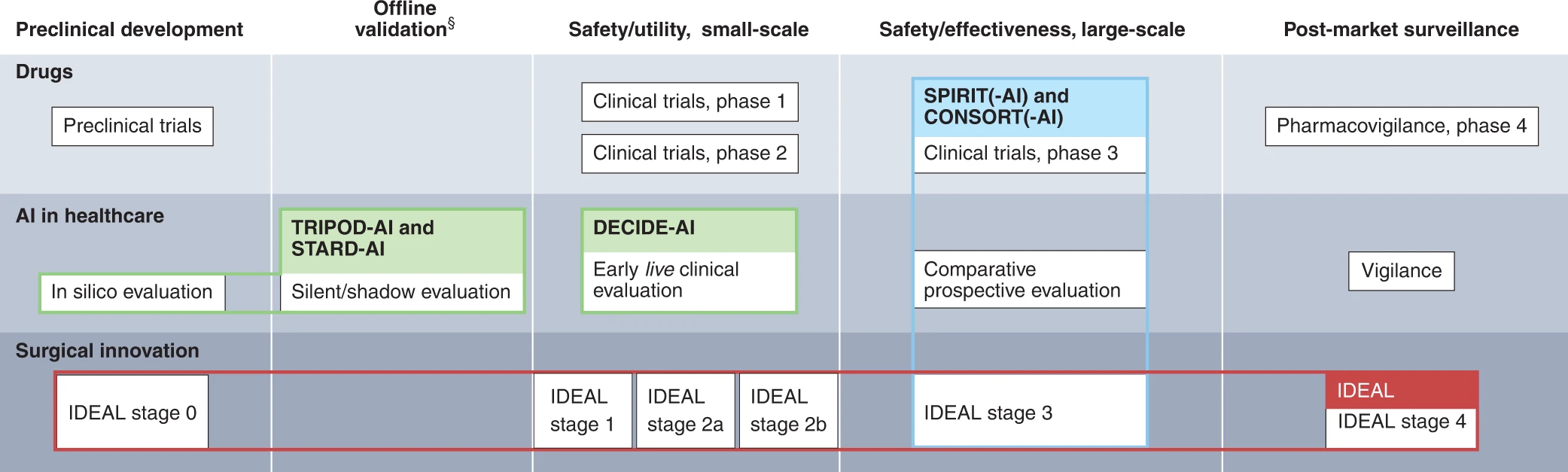

- Evaluation, evaluation, and evaluation! Most business stakeholders interested in AI technologies face a dilemma because they do not understand the technical details of AI/ML algorithms and models. If you rate the difficulty of remodeling a kitchen for the first time as 5 (complying with building codes, choosing materials and a contractor, managing the budget, etc.), conducting a PoC AI project is 5 times as difficult, and deploying an AI solution is 100-500 times more challenging. The AI field still feels like the wild west of the 1800s: full of traps, with few standards and regulations. If you rely on the developer or vendor to evaluate, they can cherry-pick and show you wonderful results that would not hold in reality. (See the recent Nature article titled “The reproducibility issues that haunt health-care AI” [1].) However, you can conduct your own independent evaluation of the final deliverables of any AI project by using withheld data that the AI model has never seen. This does not guarantee finding all the biases and caveats of the models, but it helps reduce risk significantly. There are detailed guidelines for reporting and evaluating AI applications in biomedicine [2], as shown in Figure 3.

Figure 3: Published reporting guidelines for AI applications in drug therapies, healthcare practice and surgical innovation. The colored lines represent reporting guidelines. TRIPOD-AI, STARD-AI, SPIRIT/CONSORT and SPIRIT/CONSORT-AI are study design specific; DECIDE-AI and IDEAL are stage specific. Depending on the context, more than one study design can be appropriate for each stage.

- Building a data culture in your organization is important. Building the right data infrastructure and successfully deploying AI solutions require both top leaders’ commitment and truly experienced talent. A mindset that values both real business impact and innovation is necessary. Too many well-intentioned AI or big data initiatives have failed to survive past the PoC phase because of insufficient buy-in, both at the executive leadership level and among the wider workforce. Is there only verbal support, or serious investment, from top leaders? Is there a real data-driven decision-making process? Are there truly competent people who understand both the business process and the technology? Interestingly, I have repeatedly found that having top-notch doctors and computer scientists does not guarantee successful AI deployment. Communication and collaboration are key to removing the technical barriers that derail deployment. A leader with enough knowledge in both areas is necessary to lead a cohesive multi-functional team toward success.
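The independent evaluation on withheld data recommended in the checklist can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a complete evaluation protocol: it assumes a binary prediction task, and the names `evaluate_on_holdout`, `HoldoutReport`, `vendor_model`, and the `risk_score` field are hypothetical, as are the synthetic records. In practice the `predict` function would wrap whatever model the developer or vendor delivers.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class HoldoutReport:
    n: int
    sensitivity: float  # share of actual positives the model flagged
    specificity: float  # share of actual negatives the model cleared
    precision: float    # share of positive predictions that were correct


def evaluate_on_holdout(
    predict: Callable[[dict], int],
    records: Sequence[dict],
    labels: Sequence[int],
) -> HoldoutReport:
    """Score a binary classifier (outputs 0 or 1) on withheld records."""
    if len(records) != len(labels):
        raise ValueError("records and labels must have the same length")

    tp = fp = tn = fn = 0
    for record, truth in zip(records, labels):
        guess = predict(record)
        if guess == 1 and truth == 1:
            tp += 1
        elif guess == 1 and truth == 0:
            fp += 1
        elif guess == 0 and truth == 0:
            tn += 1
        else:
            fn += 1  # predicted 0 for an actual positive

    def ratio(numerator: int, denominator: int) -> float:
        # Return NaN instead of raising when a class is absent from the data.
        return numerator / denominator if denominator else float("nan")

    return HoldoutReport(
        n=len(labels),
        sensitivity=ratio(tp, tp + fn),
        specificity=ratio(tn, tn + fp),
        precision=ratio(tp, tp + fp),
    )


# Hypothetical stand-in for a delivered model: flags records scoring above 0.5.
def vendor_model(record: dict) -> int:
    return 1 if record["risk_score"] > 0.5 else 0


# Synthetic withheld data that the model was never trained or tuned on.
holdout_records = [
    {"risk_score": 0.9},
    {"risk_score": 0.2},
    {"risk_score": 0.7},
    {"risk_score": 0.4},
    {"risk_score": 0.1},
]
holdout_labels = [1, 0, 0, 1, 0]

report = evaluate_on_holdout(vendor_model, holdout_records, holdout_labels)
print(report)
```

The important design choice is that the withheld records and their labels stay under the control of the organization buying or commissioning the model, so the reported numbers cannot be cherry-picked. Running the same function separately on subgroups of the withheld data (for example, by site or patient population) is one way to look for the biases mentioned above.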

Last but not least, I would like to say that truly great infrastructure goes unnoticed. Most of us only notice infrastructure when we cannot get a connection to make a call in the mountains, or when we are stuck at an airport during the holidays because of a Federal Aviation Administration (FAA) system failure. In addition, infrastructure is an expense item on any organization’s Profit & Loss statement. Which department is going to advocate for it, and which department is going to pick up the cost? Do they have strong voices in the organization? How long does it take to see the return on investment? Without a deep understanding of, and proper solutions to, these organizational, psychological, and cultural challenges, most AI initiatives are unlikely to break out of proof-of-concept purgatory.

References:

- [1] Sohn, E. The reproducibility issues that haunt health-care AI. Nature 613 (2023).

- [2] Vasey, B., Nagendran, M., Campbell, B. et al. Reporting guideline for the early-stage clinical evaluation of decision support systems driven by artificial intelligence: DECIDE-AI. Nat Med 28, 924–933 (2022).
